EmailMarketingZone.es · April 2026 · 8 min read

A/B testing is the closest thing email marketing has to a superpower. It replaces opinion with evidence. It settles debates that could otherwise consume hours of a team’s energy. And when done consistently over time, it transforms a marketing program from a series of educated guesses into a precision instrument calibrated by real data from real subscribers behaving in real conditions.

This guide covers what to test, how to run a statistically valid test, which metric to judge each test by, and how to turn testing into a routine that actually improves your email performance.

What Is A/B Testing in Email Marketing?

An A/B test sends two versions of an email to two randomly assigned groups of your audience at the same time (one version to group A, one to group B), with a single variable changed between them. After a defined period, you compare the performance of both versions, then send the winner to the rest of your list or apply what you learned to future campaigns. The key principle: only one variable should differ between the two versions. Change two things at once and you cannot know which one caused the difference in results.
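The split itself is easy to get wrong if groups are picked by hand or by signup order. Below is a minimal sketch of a random split, assuming your list is available as a plain Python list of addresses. The function name `split_audience` and the `test_fraction` and `seed` parameters are illustrative, not part of any email platform's API.

```python
import random


def split_audience(subscribers, test_fraction=0.2, seed=42):
    """Randomly carve a test slice out of the list and split it into A and B.

    Returns (group_a, group_b, remainder). The remainder is the part of the
    list that receives the winning version after the test ends.
    """
    shuffled = list(subscribers)           # copy so the caller's list is untouched
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible

    test_size = int(len(shuffled) * test_fraction)
    half = test_size // 2                  # equal-sized groups

    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]
    return group_a, group_b, remainder


if __name__ == "__main__":
    audience = [f"user{i}@example.com" for i in range(1000)]
    a, b, rest = split_audience(audience)
    print(len(a), len(b), len(rest))
```

Shuffling before slicing means neither group is biased toward older subscribers, a particular signup source, or any other ordering baked into the list.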

What to Test First

Not all tests are equally valuable. The elements that typically have the highest impact on performance — and should therefore be tested first — are your subject line, your call to action, your offer or incentive, your email length, and your send time. Secondary elements worth testing after the high-impact variables are optimized include the sender name (your name versus your company name), the use of images versus text-only, button color and text, preview text, and personalization versus no personalization.

How to Run a Statistically Valid Test

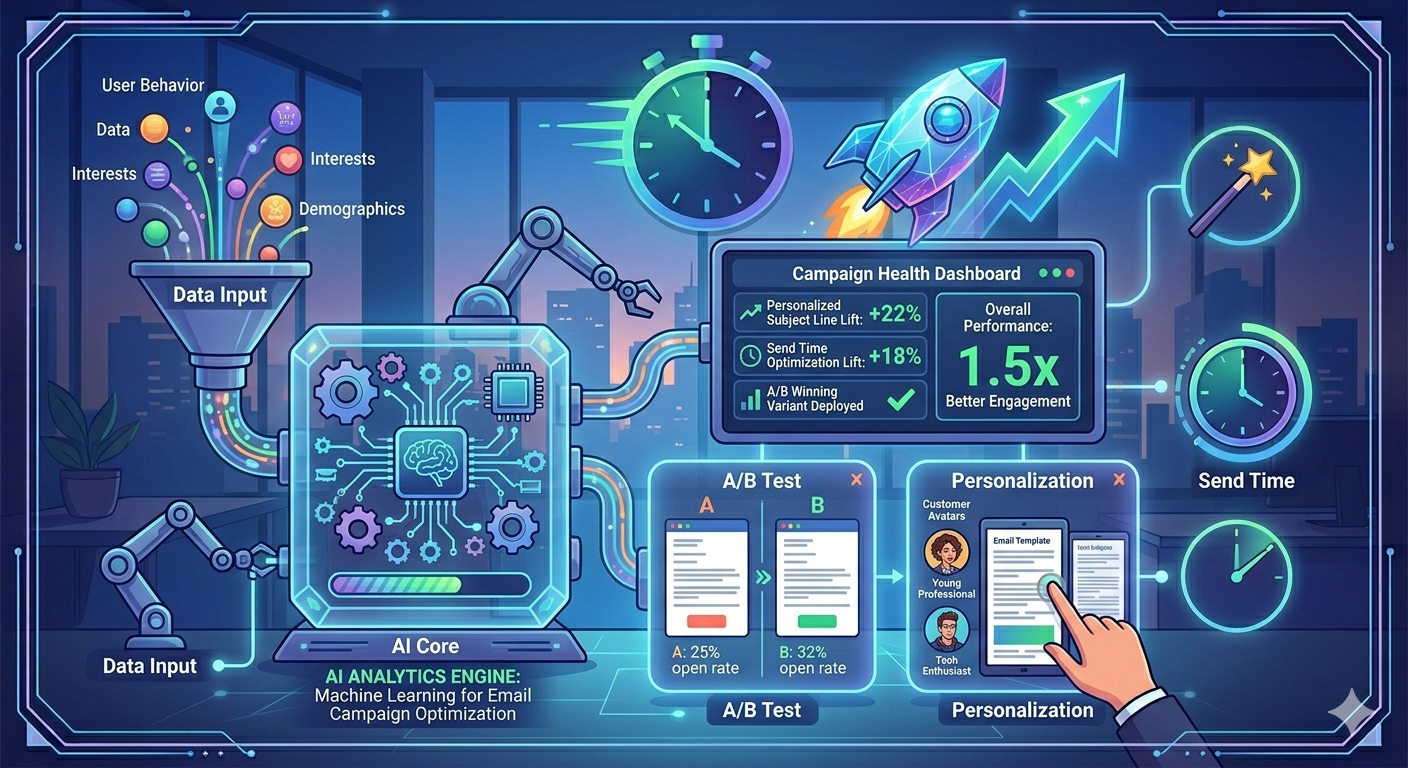

The most common A/B testing mistake is drawing conclusions from insufficient data. A test that runs for one hour on 200 subscribers is not a valid test; it is an anecdote. For statistically meaningful results, you need a large enough sample size and a long enough testing window. As a rough guide, each variant should be sent to at least 500 to 1,000 subscribers, and the test should run for at least 24 hours to account for time-of-day variations in engagement behavior. The smaller the difference you expect between variants, the more subscribers you need to detect it. Many email platforms include built-in statistical confidence indicators; do not implement a winner until confidence exceeds 95%.
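If your platform does not report confidence, you can approximate it yourself. The sketch below uses a two-proportion z-test and a standard sample size formula for comparing two rates. It is one common approach, not necessarily the method your email platform uses, and the function names and default values (5% significance, 80% power) are assumptions made for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    return z, p_value


def sample_size_per_variant(baseline, expected, alpha=0.05, power=0.8):
    """Approximate subscribers needed per variant to detect baseline vs expected."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (baseline - expected) ** 2)


if __name__ == "__main__":
    # Hypothetical numbers: 200 of 1,000 opens for A versus 240 of 1,000 for B.
    z, p = two_proportion_z_test(200, 1000, 240, 1000)
    print(f"z = {z:.2f}, p = {p:.3f}, significant at 95%: {p < 0.05}")
    print(sample_size_per_variant(baseline=0.20, expected=0.25))
```

With these assumptions, detecting a lift from a 20% to a 25% open rate needs roughly 1,100 subscribers per variant, which is in line with the rough guide above. Detecting a smaller lift needs considerably more.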

Choosing the Right Success Metric

Different tests should be measured by different metrics. Subject line tests should be judged by open rate. CTA tests should be judged by click-to-open rate (CTOR). Offer tests should be judged by conversion rate or revenue per email. Judging a test by the wrong metric produces misleading results: a subject line might increase opens while decreasing conversions if it promises something the email does not deliver. Always align your success metric to the specific goal of the element you are testing.
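To make the metrics concrete, here is a small sketch of how each one can be computed from raw campaign counts. The `VariantStats` fields are hypothetical names, and the denominators are assumptions: platforms differ on whether conversion rate is measured per delivered email or per click, so check your own platform's definitions.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    delivered: int       # emails that reached an inbox
    unique_opens: int
    unique_clicks: int
    conversions: int     # purchases, signups, or whatever the goal is
    revenue: float


def _ratio(numerator, denominator):
    """Divide safely, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def open_rate(s):
    return _ratio(s.unique_opens, s.delivered)


def click_to_open_rate(s):
    return _ratio(s.unique_clicks, s.unique_opens)


def conversion_rate(s):
    # Assumption: conversions per delivered email.
    return _ratio(s.conversions, s.delivered)


def revenue_per_email(s):
    return _ratio(s.revenue, s.delivered)


if __name__ == "__main__":
    # Hypothetical data: B's subject line wins on opens but loses on conversions.
    a = VariantStats(delivered=1000, unique_opens=250, unique_clicks=50,
                     conversions=15, revenue=750.0)
    b = VariantStats(delivered=1000, unique_opens=320, unique_clicks=48,
                     conversions=9, revenue=450.0)
    for name, s in (("A", a), ("B", b)):
        print(name, f"open={open_rate(s):.1%}", f"CTOR={click_to_open_rate(s):.1%}",
              f"conv={conversion_rate(s):.1%}", f"rev/email={revenue_per_email(s):.2f}")
```

In this made-up example, variant B would win a test judged on open rate alone and lose one judged on conversions, which is exactly the mismatch described above.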

Building a Testing Calendar

The difference between marketers who benefit enormously from A/B testing and those who treat it as an occasional experiment is consistency. Build a testing calendar that includes at least one A/B test per month — ideally one per significant campaign. Document every test: what you tested, the hypothesis, the result, and what you changed as a result. This documentation becomes your email program’s institutional knowledge — a compounding asset that grows more valuable over time.
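A test log does not need special software. As one possible shape, the sketch below stores each test as a structured record and serializes it to a single JSON line that can be appended to a shared file. The `ExperimentRecord` class and its field names are illustrative assumptions that mirror the items listed above, and the sample entry is invented.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ExperimentRecord:
    date: str          # when the test was sent, e.g. "2026-04-14"
    campaign: str
    variable: str      # the single element that differed between A and B
    hypothesis: str
    metric: str        # the success metric chosen before sending
    result: str
    action_taken: str  # what you changed as a result


def to_log_line(record):
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), ensure_ascii=False)


if __name__ == "__main__":
    record = ExperimentRecord(
        date="2026-04-14",
        campaign="Spring newsletter",
        variable="subject line",
        hypothesis="A question in the subject line will lift opens",
        metric="open rate",
        result="B ahead at the planned endpoint, confidence above 95%",
        action_taken="Use question-style subject lines in the next newsletter",
    )
    print(to_log_line(record))
```

Recording the metric and hypothesis before the send, rather than after, keeps the log honest about what each test was meant to prove.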

Advanced Testing: Multivariate and Sequence Testing

Once basic A/B testing is embedded in your workflow, multivariate testing allows you to test multiple variables simultaneously with a statistically sound methodology. Because every combination of variables becomes its own variant, it requires a much larger audience to generate valid results. Sequence testing, which tests the order and timing of emails within an automation flow, is an advanced technique that can substantially improve the performance of welcome sequences, nurture flows, and re-engagement campaigns. These techniques require more technical sophistication, but they can generate disproportionately large performance improvements.
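To see why multivariate tests need larger audiences, it helps to count the variants. The sketch below enumerates every combination in a full-factorial design and multiplies by a per-variant sample size. The factor names, values, and the figure of 1,000 subscribers per variant are illustrative assumptions.

```python
from itertools import product


def multivariate_cells(factors):
    """List every combination of factor values (a full-factorial design)."""
    names = list(factors)
    combos = product(*(factors[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


def required_audience(factors, per_cell):
    """Total subscribers needed if each combination gets per_cell recipients."""
    return len(multivariate_cells(factors)) * per_cell


if __name__ == "__main__":
    factors = {
        "subject_line": ["question", "statement"],
        "cta_text": ["Buy now", "Learn more"],
        "hero_image": [True, False],
    }
    cells = multivariate_cells(factors)
    print(len(cells), "variants")
    print(required_audience(factors, per_cell=1000), "subscribers needed")
```

Three two-way choices already produce eight variants, so a list that comfortably supports an A/B test may be too small for even a modest multivariate design.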

The biggest A/B testing mistake: stopping the test early because one variant is ahead. Early results are often misleading, and every extra check of an unfinished test is another chance to mistake random noise for a real difference. Always run tests to the planned endpoint and the target sample size before making decisions. Premature winners frequently become losers when the full data arrives.
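A small simulation can illustrate the cost of stopping early. The sketch below runs many simulated tests in which both variants have the same true open rate, so any declared winner is a false alarm. It compares checking at every interim look against checking once at the planned endpoint. All parameters (run count, sample size, number of looks, a 20% open rate) are assumptions chosen for illustration, and the significance check is a simple two-proportion z-test.

```python
import math
import random


def p_value(opens_a, n_a, opens_b, n_b):
    """Two-sided p-value from a two-proportion z-test."""
    pooled = (opens_a + opens_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0
    z = (opens_b / n_b - opens_a / n_a) / std_err
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_peeking(runs=500, n_per_variant=1000, looks=10, rate=0.2, seed=1):
    """Return (false alarm rate with peeking, false alarm rate at endpoint only)."""
    rng = random.Random(seed)
    step = n_per_variant // looks  # subscribers added per variant between looks
    flagged_with_peeking = 0
    flagged_at_endpoint = 0
    for _ in range(runs):
        opens_a = opens_b = 0
        flagged = False
        p = 1.0
        for look in range(1, looks + 1):
            # Both variants share the same true open rate: there is no real winner.
            opens_a += sum(rng.random() < rate for _ in range(step))
            opens_b += sum(rng.random() < rate for _ in range(step))
            p = p_value(opens_a, look * step, opens_b, look * step)
            if p < 0.05:
                flagged = True  # a marketer who peeks would stop and pick a winner here
        flagged_with_peeking += flagged
        flagged_at_endpoint += p < 0.05  # p now holds the final look's value
    return flagged_with_peeking / runs, flagged_at_endpoint / runs


if __name__ == "__main__":
    peeking, endpoint = simulate_peeking()
    print(f"false winners when peeking: {peeking:.1%}")
    print(f"false winners at endpoint:  {endpoint:.1%}")
```

Because the final look is one of the looks, the peeking rate can never be lower than the endpoint rate, and with repeated looks it is usually much higher than the nominal 5%.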

Test, Learn, Improve — Forever

There is no point at which your email program is so well-optimized that A/B testing becomes unnecessary. Subscriber behavior changes. Market conditions shift. New segments join your list with different preferences. The marketers who continue testing consistently — even when results are already strong — are the ones who maintain their edge as everything around them evolves. Make testing a habit, not an event.